Everyone and their dog have shared their opinion on the recent Google AlphaGo commotion of AI beating Fan Hui, a pro player, and its upcoming match against Lee Sedol. As an avid Go player, as well as a machine learning practitioner with a long history of programming game AIs, I have a different perspective on what AlphaGo’s victories ultimately mean and why you should care.

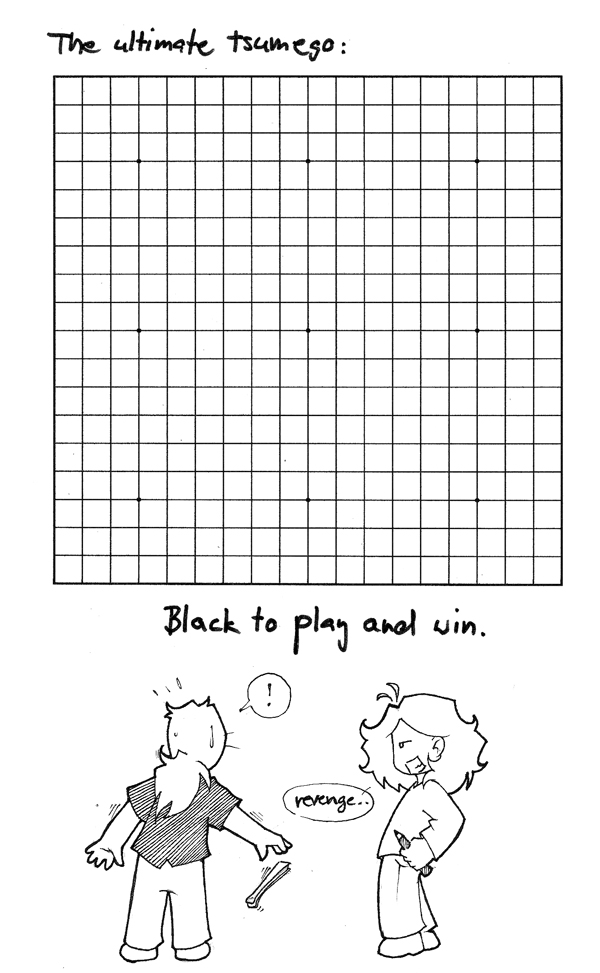

The ultimate Go problem to solve. Image courtesy of the awesome emptytriangle.com.

I don’t intend to rehash the usual points: Go is hard, computers are fast, deep learning cool, SkyNet around the corner, yadda yadda. Mostly true, but only relevant here to the microcosm of one obscure, nerdy game. I love Go, but the honest question is: who really gives a shit about Go computers beating Go humans? It’s been done in countless other games before. How is this a “victory for humanity”?

In this blog post, I want to argue for a different angle that most journalists miss. Go exhibits some unique properties that make it stand apart. And I don’t mean in the usual sense of “Can the particular algorithm from AlphaGo be used to solve other, more immediate business problems?” (it can, approximately to the degree IBM’s DeepBlue and Watson were business successes…).

Rather, I mean more simply and directly: what will be the high-level strategies that AlphaGo discovers in Go? Because to the degree we (humans) can identify and name those strategies and concepts in AlphaGo’s play, they could be immediately transferable to real life situations, outside of Go. It’s not easy to see this connection between an obscure desk game, played with black and white stones on a 19×19 board, and anything “real”, but that’s exactly what I want to argue here.

Getting the theory out of the way

But first, a few basic facts, just to be on the same page:

-

The game of Go is trivial from the formal perspective. Only two players, perfect information (everyone knows the entire state of the game at any time, nothing hidden, no randomness), discrete play (players take turns), finite state (only a limited number of well defined possibilities where to play next). We know a winning strategy exists (Zermelo’s Theorem): either the first player to move (“black”) can always force a win, or the opponent (“white”) can.

In a way, Go belongs to the safest, most boring type of formal problems you can define.

-

Go is a challenging game from the practical perspective. We know a winning strategy must exist (a solution to Go, effectively), we just don’t know what it is. Go therefore counts among unsolved games, along with chess, for example.

There are simply more possible board positions in Go than there are atoms in the universe (such enormous! much numbers!). By the way, little known fact: both checkers and gomoku (five-in-a-row) have already been solved, despite also exhibiting this exponential growth in their theoretical state space.

On that note, this disconnect between “knowing a solution exists” vs. “having a practical way of playing” will be immediately familiar to mathematicians (existential vs. constructive proofs) as well as programmers (algorithmic complexity). I’d invite all theoretically minded readers to check out Scott Aaronson’s Why Philosophers Should Care About Computational Complexity essay — an entertaining blend of quantum computing, economics, cryptography, machine learning and philosophy.

-

Go is my hobby. I love the game, though I’m only about an amateur 1 dan.

Playing at a Go club in Saigon (left).

Additionally, I’ve gotten into the machine learning field by means of programming commercial game AIs. Gomoku was the first AI I seriously tackled, and the final program never lost as black. The feeling of losing to one’s own bot is therefore nothing new to me :) Fun fact: my github handle, piskvorky, means simply “five-in-a-row” in Czech.

-

Google DeepMind’s AlphaGo computer bot beat Fun Hui, a Chinese professional player and the reigning European champion, last October (though they kept it hush hush until their publication in Nature this January).

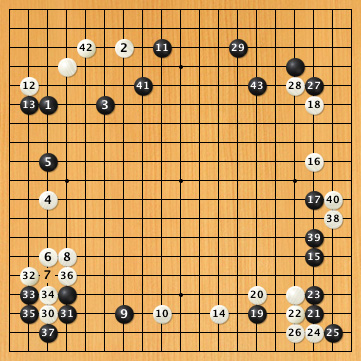

AlphaGo’s victories have been covered extensively: see AlphaGo on the front page of Nature; thoughts on the cognitive aspects and hybrid, symbolic systems by Gary Marcus; Miles Brundage on the software and hardware progress of Go AIs; analysis of AlphaGo’s games by a MyungWan Kim, 9 dan Go professional; technical prognosis for the upcoming Lee Sedol match etc etc.

To put this in a practical perspective: previous Go bots had been winning against worse players (myself included) for some time now; future bots will be beating stronger humans still. Possibly all humans, and possibly quite soon. A showdown between AlphaGo and Lee Sedol, one of the world’s top Go players, is starting next Wednesday, on 9th March 2016. Fun rumour: Google hired Fan Hui, the defeated Euro champ from October, to train AlphaGo for this match.

Obviously, the world of Go is abuzz, excitement off the scales. Lives of professional and casual players alike are about to change forever.

The era of snobbish snickering at chess is coming to an end.

But sensationalist journalism and hurt egos aside, Go AI reaching and surpassing human levels is hardly a surprise to people in the field. As Garry Kasparov put it: “the writing is on the wall”.

So, unfazed by AlphaGo’s victories and without much hope of seeing Go rigorously solved in my lifetime, I am nevertheless super excited about these advances. The reason is a little more subtle though — it’s due to the intrinsic nature of Go as a game.

Go as mirror of life

Games are a simplified reflection of certain aspects of our life. Their evolutionary appeal and ultimate purpose is as simulations: risk-free training for situations that are too expensive to act out in the real world. Go is extraordinary in this respect. It has survived thousands of years, a testament that it captures something rather fundamental to human existence. What insights can we draw from Go?

The earliest surviving recording of a Go game. The Wu prince Sun Ce plays his general, Lü Fan (c. 190 AD).

This is of course not a new insight (which is why I’m surprised I don’t see it mentioned more often in AlphaGo’s press coverage). For example, the U.S. Army’s Strategic Studies Institute has explored this angle thoroughly, in their Learning from the Stones: A Go Approach to Mastering China’s Strategic Concept of Shi:

In this monograph, the author uses the ancient game of Go as a metaphor for the Chinese approach to strategy. He shows that this is very different than the linear method that underlies American strategy. By better understanding Go, he argues, American strategies could better understand Chinese strategy.

How is that for a different angle on AlphaGo victories?

That is the core appeal of Go; it provides strategic insights that made it survive millennia, virtually unchanged. Everything about the game reflects this: from its terminology (life&death problems, influence and initiative, sacrifice, connecting groups, dormant potential) to Go proverbs. To name a few: “your enemy’s key point is your key point”, “a rich man should not pick quarrels“, “make a feint to the east while attacking in the west”, “make a fist before striking“, “urgent before big”, “play away from your own strong positions”, “worse than kill, let your opponents live small” and countless others.

With Go, there’s a remarkably clear connection between these hand-wavy generic adages and their execution. The effects of Go on cultivating the strategic mind have been recognised as one of the basic four virtues of the Chinese scholar.

“When starting, the best strategy is to spread the pieces far apart and stretch them out, to encircle and attack the opponent, and thus win by having the most points vacant. The next best strategy emphasises cutting off the enemy to seek advantage. In that case the outcome is uncertain and calculation is necessary to decide the issue. The worst strategy is to defend the borders and corners, hastily building eyes so as to protect oneself in a small area.”

These are remarkably astute Go (and life) tips, and sound advice even by modern standards. They were written down by one Huan Tan, around 5 BC (!). A fascinating window into the thoughts and strategies of people from around the time of Jesus.

(A blasphemous thought: how much did these words of early Go masters influence the two millennia of Go that came since? Are there other, perhaps superior strategies, along the lines of the unorthodox “black hole” Go opening popularised recently?)

People playing Go, Ming dynasty (15th century).

There are no “special” pieces or funky moves in Go; all stones are exactly the same, with the same intrinsic value. To the extent your stones gain advantage over your opponent’s, they do so by means of their mutual interconnectedness, and not their individual nature.

Go gave us beautiful art, inspired poets, motivated anime, incited modern anarchists, libertarians, communists, religious folk and mystics alike. But I don’t mean to wax lyrical about Go’s beauty and elegance. When pragmatic, explicit analysis clashes with vague hunches and fuzzy intuition, it’s always a victory for the cold hard reality.

And this is precisely what excites me about AlphaGo. I don’t care much if it beats all humans (I agree with Kasparov’s assessment — the writing is on the wall for Go).

I do care though if a new pattern of playing emerges. Shedding human preconceptions and cognitive crutches, like “sente” or “ko”. Something on the level of re-inventing the concept of “sente”, or “corners first”, or “to attack West, lean against East“… only different, more advanced. And by analogy, I’m excited by the prospect of applying these new concepts in other situations, outside of Go, in real life circumstances.

Can AlphaGo deliver?

Advances in computer Go are not about whether bots can play better than humans. That question is about as interesting as whether airplanes can fly faster than birds.

Rather, I’m excited about whether, in the process of surpassing us, computers can teach us something surprising about Go itself. And, by virtue of Go’s remarkable life-mirroring qualities, about our everyday lives.

A wise man once said, “The purpose of computing is insight, not numbers”.

To me, the promise of Go AI is delivering insight, not victories.

RARE Technologies

RARE Technologies

Comments 8

Well written & good to see you still play go 🙂

I also liked the links in “Go gave us beautiful art, inspired poets, motivated anime, incited modern anarchists, libertarians, communists, religious folk and mystics alike.” They are all new and fun to check.

Author

Hello Steven! Yes, still playing… as time permits 🙂

Loved your post and the insight! I have to agree with you in being excited about new ways AlphaGo might discover about the way Go can be played differently – but will it be able to do this if it is training with (and learning from) humans?

Author

Thanks! And good question 🙂

My 2 cents: not with the current AlphaGo architecture.

There is a reinforcement learning component, but it doesn’t seem prominent enough to force that level of creativity. It’s just not possible to “dream up” enough self-plays to overcome the accumulated millennia of human knowledge, in such a short time.

But if, after this match, DeepMind tweaks AlphaGo’s knobs to be more daring, to ground its training less in historical human matches and more in exploration of Go from first principles… who knows?

I’ll be looking forward to those tweaks then!

Author

It’s becoming a reality!

Denis Hassabis, the founder of DeepMind, says they’ll train AlphaGo purely from self-play next! Yay 🙂

business idea :: books have been written about chess and game of life … have you considered writing about ‘What Go teaches us about Life’ ?

Author

I wish I could live for a thousand years, doing all the things I find fascinating!

I believe there are people better qualified to write that book, so I’ll focus on other stuff 🙂