Some of our consulting tasks keep recurring, hinting at a widespread pain point across our clients and industries. One of them is looking for meaningful nuggets of information in large unstructured document databases. How do you extract actionable insights and relationships from messy datasets, such as customer support records? How about financial reports, or CVs? Are you still guessing, hoping to match keywords, ignoring the last decade of research into machine learning and information retrieval?

As you may know, we’ve built ScaleText.com to address this need. ScaleText lets businesses automatically organize domain-specific documents, break them down into actionable pieces of information (which we call “nuggets”), and search by semantic similarity and implicit semantic meaning, to discover the information that matters.

One of the key pieces of technology we needed for ScaleText was efficient vector indexing and search. Academic lingo aside, can you imagine the headache of deploying and maintaining yet another highly specialized software engine on top of all your existing infrastructure? We quickly realized that the ops, maintenance and security overhead is a deal-breaker for real businesses with real, sensitive, mission-critical data.

ACL was a blast: lots of amazing people, discussions and suggestions for ScaleText. Vancouver is quite a way away from our HQ in Prague, but well worth the trip.

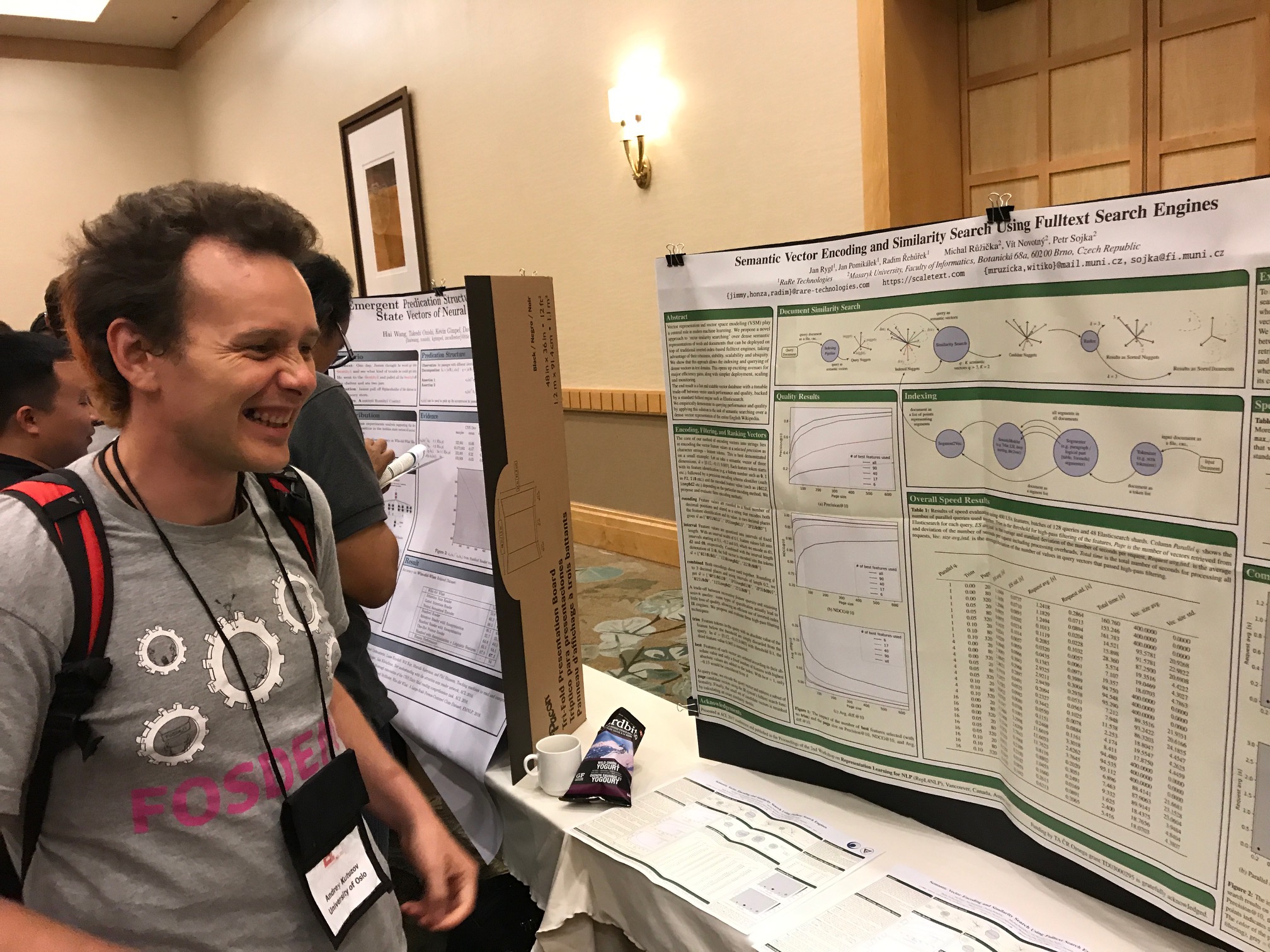

Today, we’re happy to announce that our paper on practical large-scale vector indexing and semantic search was accepted to the 2nd Workshop on Representation Learning for NLP at ACL 2017! Prof. Petr Sojka and Vít Novotný, our research partners from Masaryk University in Brno, presented the paper in Vancouver at the beginning of August.

The paper is called “Semantic Vector Encoding and Similarity Search Using Fulltext Search Engines” and describes an automated method for running vector similarity search on top of a traditional fulltext engine. Do you already have a fulltext system such as Elasticsearch, Solr or Sphinx in place? Then applying ScaleText is seamless: our technique adds semantic search right on top. By recoding vectors into a form the fulltext engine can index, we can quickly process extremely large collections, piggy-backing on your existing production-grade fulltext setup, including machine clusters, sharding and data replication!
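To give a feel for the general idea, here is a minimal Python sketch of one way to recode dense vectors as text tokens so that an inverted index can retrieve candidates, which are then re-ranked by exact cosine similarity. It is an illustration under our own assumptions, not the exact encoding from the paper: the token format, the `encode` and `cosine` functions, and the `TokenIndex` class are all hypothetical, and the in-memory index merely stands in for a real engine such as Elasticsearch, Solr or Sphinx.

```python
import math
from collections import defaultdict


def encode(vector, precision=1):
    """Recode a dense vector as string tokens (hypothetical token format).

    Each feature is rounded to `precision` decimals; features that round
    to zero are dropped, so similar vectors end up sharing many tokens.
    """
    tokens = []
    for i, value in enumerate(vector):
        rounded = round(value, precision)
        if rounded != 0:
            tokens.append(f"f{i}_{rounded:.{precision}f}")
    return tokens


def cosine(a, b):
    """Exact cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TokenIndex:
    """Tiny in-memory stand-in for a fulltext engine's inverted index."""

    def __init__(self, precision=1):
        self.precision = precision
        self.postings = defaultdict(set)  # token -> ids of documents containing it
        self.vectors = {}                 # doc id -> original dense vector

    def add(self, doc_id, vector):
        self.vectors[doc_id] = vector
        for token in encode(vector, self.precision):
            self.postings[token].add(doc_id)

    def search(self, query, top_k=3, candidates=10):
        # Stage 1: cheap candidate retrieval by counting shared tokens,
        # which is the part a fulltext engine would do for us.
        overlap = defaultdict(int)
        for token in encode(query, self.precision):
            for doc_id in self.postings.get(token, ()):
                overlap[doc_id] += 1
        shortlist = sorted(overlap, key=lambda d: (-overlap[d], d))[:candidates]

        # Stage 2: re-rank the shortlist by exact cosine similarity.
        scored = [(doc_id, cosine(query, self.vectors[doc_id])) for doc_id in shortlist]
        scored.sort(key=lambda pair: (-pair[1], pair[0]))
        return scored[:top_k]


# Toy data: two near-duplicate vectors and one unrelated vector.
index = TokenIndex(precision=1)
index.add("refund", [0.82, 0.11, -0.31])
index.add("invoice", [0.79, 0.14, -0.28])
index.add("resume", [-0.40, 0.90, 0.02])

results = index.search([0.80, 0.12, -0.30], top_k=2)
for doc_id, score in results:
    print(doc_id, round(score, 4))
```

The rounding precision is the main knob in this sketch: coarser rounding makes more vectors share tokens (higher recall, larger shortlists), while finer rounding narrows the candidate set. Because the tokens are ordinary strings, they can in principle be indexed and sharded like any other text field.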

If you’re interested in the technical details, you can read our paper here: PDF. We’ve also patented this technology with the USPTO (no evil intentions there, but IP protection is a sad reality of today’s world).

For more info, feel free to ask for a ScaleText invite or drop us a line directly at [email protected].

RARE Technologies
