Last month, I gave a keynote at PyData Warsaw about the existing (and growing) gap between academia and industry, specifically when it comes to machine learning / data science. This is a topic close to my heart, since we’ve made our living in the no-man’s land where academia and industry collide for 7 years now. Between running our Student Incubator, creating open source software and doing cutting edge R&D work for companies like Amazon, Hearst or Autodesk, what are the lessons learned?

“Winning together: Bridging the gap between academia and industry”. PyData Warsaw conference, October 2017; slides here.

The Mummy Effect

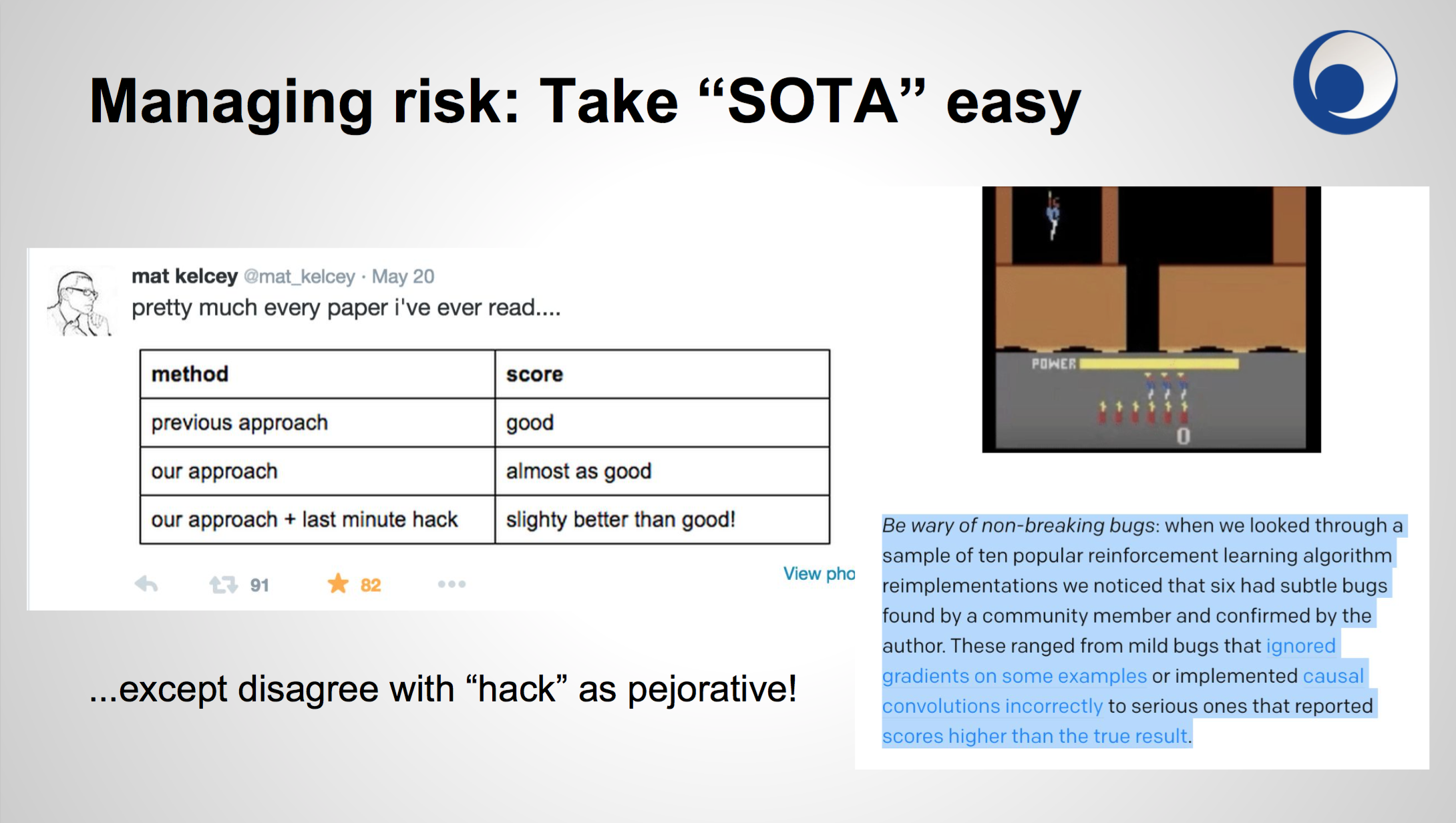

One frustration is with what I call the “Mummy Effect”: a research paper looks amazing, leaves all previous SOTAs crying in the dust. There’s a healthy buzz, perhaps even a big name / research lab behind it. You’re eager to try it out, put the results into practice.

And then you implement this exciting technique and discover all the corners the authors cut, unspecified critical parameters, the carefully omitted assumptions. That spectacular example mentioned in the paper? Change the number of epochs or the batch size slightly, and you get complete garbage output instead. The whole elaborate thing crumbles to dust on touch — like an Egyptian mummy.

We’ve experienced the Mummy Effect enough times to know it’s the norm, rather than the exception. From “solved” problems like language identification to high-dim vector search, to topic modeling, to the current deep learning craze… you name it. Careful engineering and analysis replaced by bombastic PR and fancy formulas obfuscating the substance.

The primary reason is misaligned incentives, which lead to hidden risk (or, to put it more favourably: allowing others to discover the risk for themselves) and a troubling lack of ownership.

But repeating the magic mantra of “do repeatable research!” or “just publish the bloody code, you nincompoops!” is not helpful. It is too generic, plus these are smart people (on either side of the gap) who understand the situation already. You don’t have to point out the Mummy Effect to them explicitly, they know: it’s by design.

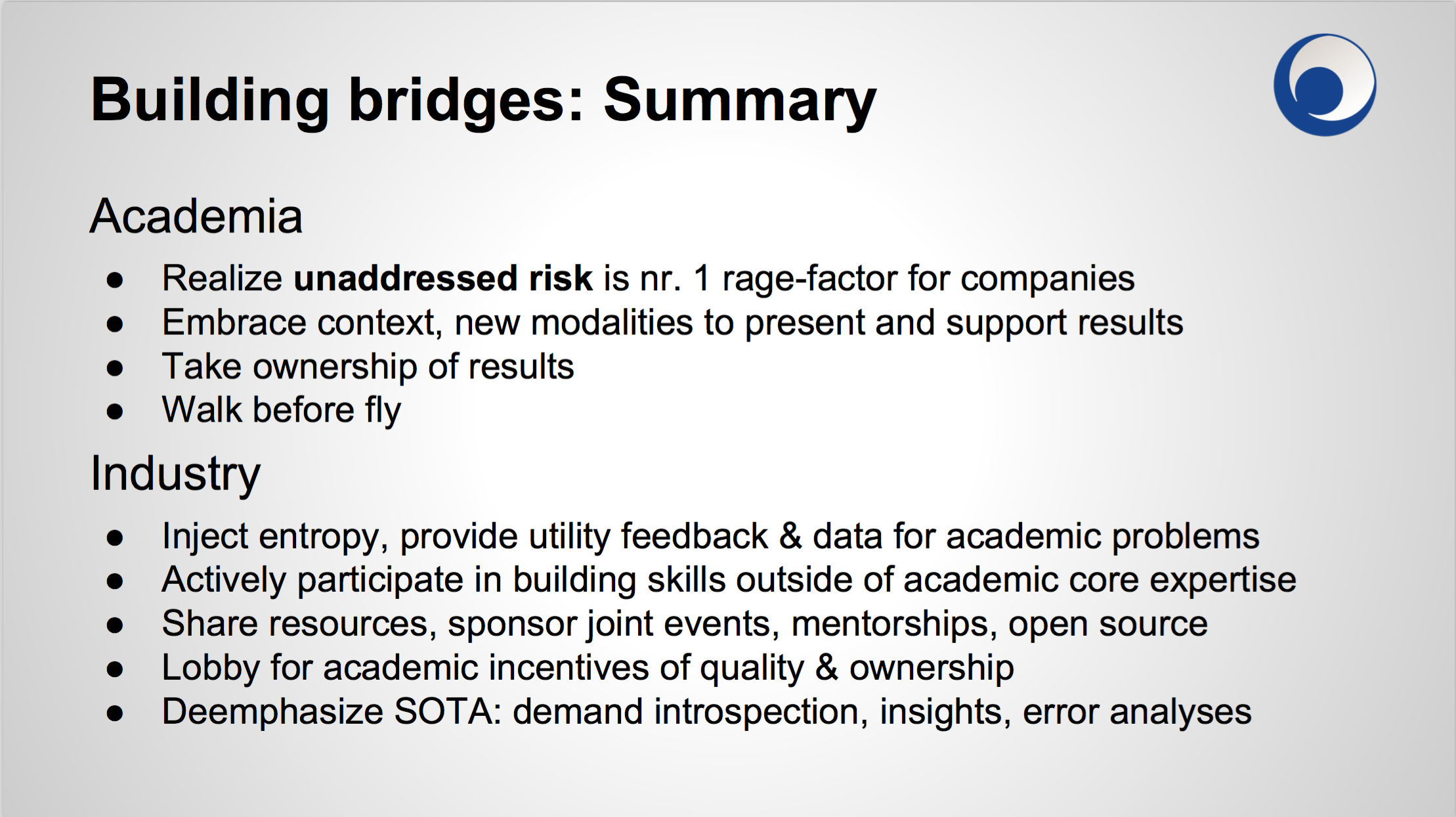

Building Concrete Bridges Between Academia and Industry

My thesis is that there are concrete, actionable things people from research and industry can do to make each other’s life easier. People typically look up to governments and mega-corps for top-down solutions, waiting for them to graciously drop a few crumbs off their table.

I personally am a firm disbeliever in “salvation from above”: it feels impotent, and it misses the many pragmatic, actionable things individuals and small companies like ours can do now, that ultimately add up to more open, sustainable, bottom-up progress. People in cushy government / mega-corp jobs tend to have too little skin in the game, so to speak.

I call these things “bridges” and offer a few horror stories in the talk, based on our experience of straddling this gap for seven years now. We do our best to bridge it with our academic partnerships, free Student Incubator and open source software on one side, and building kick-ass machine learning systems for companies like Amazon, Hearst or Autodesk on the other. (We like to finance all that “bridging fun” from our commercial activities, rather than force the costs on innocent bystanders via public taxation, but that’s a topic for another post.)

- The bridge of risk: Are you looking to make things more predictable, replaceable, orderly (business) versus striving for the novel, unique and magical (artists, craftsmen, adventurers)? One of the two primary sources of friction and misaligned objectives. When businesses and engineers rage at academic “ivory towers”, they typically rage at unaddressed risk.

-

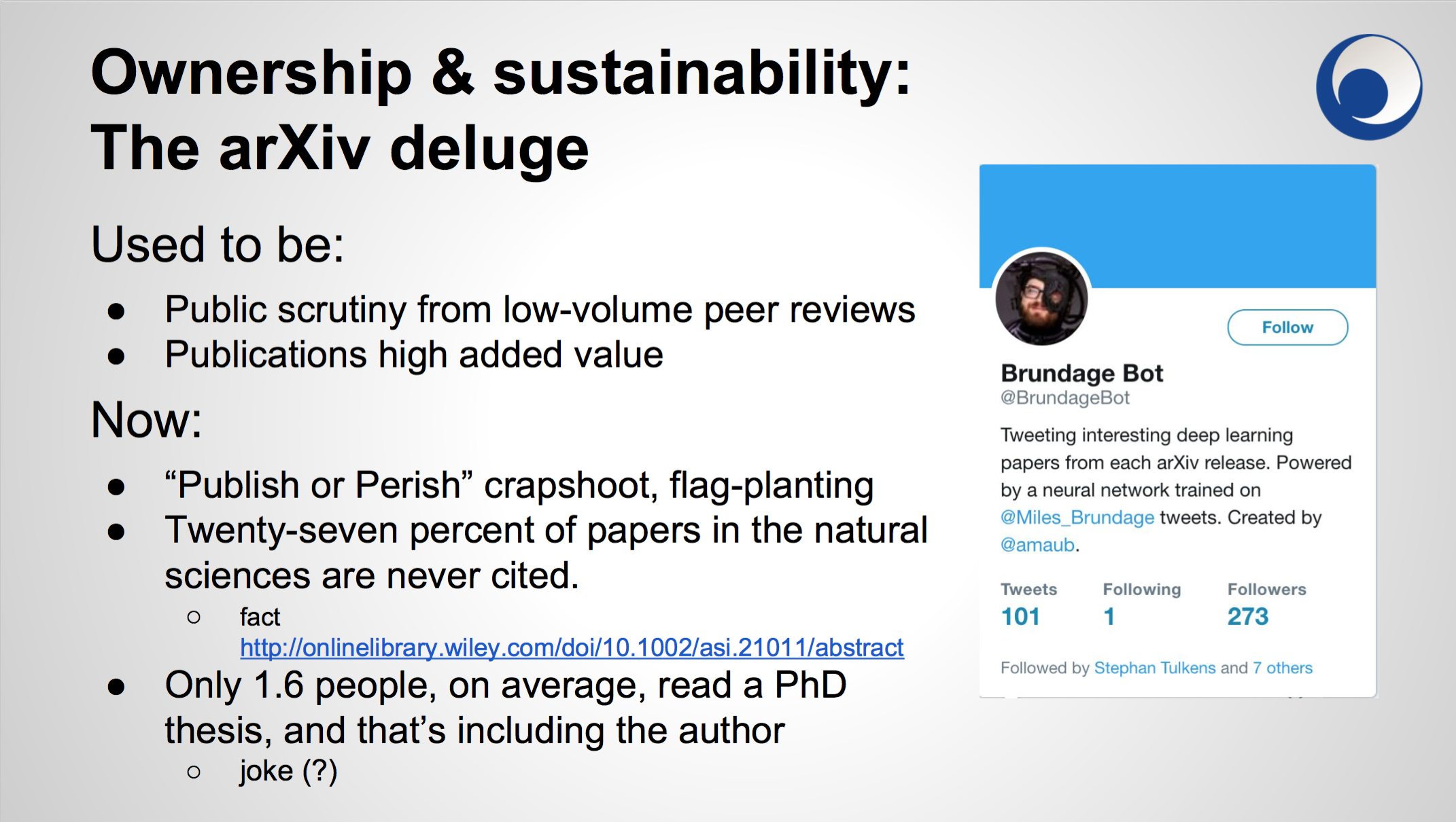

The bridge of ownership and sustainability: the crapshoot of publish-or-perish on irrelevant “standard benchmark tasks”. The sad fire-and-forget funding cycles in academia. The lack of real-world feedback (“entropy injection”) when it comes to defining worthwhile problems and datasets from the industry.

The arXiv deluge: flood gates opened by the perverse publish-or-perish incentives. The lack of dog-fooding leaves both industry and academia fumbling in the dark when it comes to “what actually works”.

Solution? The ball is in the court of business with this one, because it cannot really be solved from within academia. Tips include mentoring talented students, teaching basic engineering skills to avoid embarrassing errors, teaching basic collaboration processes, providing problem context and motivating trade-offs for objective functions. Organizing student competitions, meetups and hackathons also helps.

- The bridge of respect: As someone who’s had the questionable fortune of wearing many hats over the years, from a PhD student to researcher, corporate developer, team leader, to freelancer aka sysop+webdev+accountant+lawyer+marketer+salesman, to a business owner, I’ve met many people across many different walks of life, and can assure you most people are running as hard as they can.

Show respect for other professions, give at least the benefit of the doubt to people wearing the other hats. There’s a good chance their “bullshit” is simply a complex landscape you haven’t had a chance to explore yet.

Industry: Be aware of the Mummy Effect, take SOTA easy. Research: Do a proper error analysis instead of hiding behind aggregate evaluation metrics. Exercise basic sanity checking, sprinkle your ML pipeline with logging and concrete examples that make eyeballing easy. Learn to walk before you fly with rudimentary software engineering.
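To make the “error analysis instead of aggregate metrics” advice concrete, here is a minimal sketch in plain Python. Everything in it is an assumption for illustration: the `error_analysis` helper, the `long` / `short` slices and the toy language identification predictions are made up, not taken from the talk. The idea is simply to report accuracy per slice next to the overall number, and to log a few concrete misses so they are easy to eyeball.

```python
import logging
from collections import defaultdict

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("error_analysis")


def error_analysis(examples, max_logged=3):
    """Break accuracy down per slice and log a few concrete failures.

    `examples` is an iterable of (text, slice_name, gold, predicted) tuples.
    Returns (overall_accuracy, {slice_name: accuracy}).
    """
    totals = defaultdict(int)   # examples seen per slice
    hits = defaultdict(int)     # correct predictions per slice
    logged = defaultdict(int)   # failures already logged per slice
    for text, slice_name, gold, predicted in examples:
        totals[slice_name] += 1
        if gold == predicted:
            hits[slice_name] += 1
        elif logged[slice_name] < max_logged:
            # Concrete examples make eyeballing easy.
            logged[slice_name] += 1
            log.info("miss [%s] gold=%s predicted=%s text=%r",
                     slice_name, gold, predicted, text)
    if not totals:
        raise ValueError("no examples to analyse")
    per_slice = {name: hits[name] / totals[name] for name in totals}
    overall = sum(hits.values()) / sum(totals.values())
    return overall, per_slice


# Hypothetical predictions from some language identification model.
toy = [
    ("The quick brown fox jumps over the lazy dog", "long", "en", "en"),
    ("Szybki brązowy lis przeskakuje nad leniwym psem", "long", "pl", "pl"),
    ("Der schnelle braune Fuchs springt über den Hund", "long", "de", "de"),
    ("ok", "short", "en", "pl"),
    ("tak", "short", "pl", "pl"),
    ("gut", "short", "de", "en"),
]

overall, per_slice = error_analysis(toy)
print(f"overall accuracy: {overall:.2f}")
for name, acc in sorted(per_slice.items()):
    print(f"  {name}: {acc:.2f}")
```

On this toy data the single aggregate number looks mediocre but unremarkable, while the per-slice view shows that all the misses sit in the short texts. That is the kind of detail an aggregate metric hides.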

By the way, I was amazed how much traction this topic of “enough with the deep learning crap, stick your 2% SOTA improvement and try to understand what’s going on instead” got at the conference. Katharine Jarmul had an amazing keynote on day 1 on model interpretability (video here); there was so much overlap that I had to go home and re-do my slides for day 2, missing the conference social event. Thanks Katharine!! :)

Where next?

It certainly feels like the zeitgeist is turning. The frustrations around chasing SOTA and fragile mummy-effect results are mounting, especially in the industry. It used to be that a new technique coming out of research was met with joy and eagerness; now it’s more like “Oh Jesus, do I want to open another fragile, flag-planted can of worms?”

I share more details in the video, but it’s still rather TL;DR. Big thanks to PyData Warsaw for offering me this opportunity to vent and let off some steam. It’s hard to communicate issues on multi-disciplinary boundaries, since there are so many stereotypes and preconceptions floating around.

I definitely believe an open, pragmatic discussion is both needed and overdue. Even if we cannot align the conflicting interests, articulating them from different perspectives is the only way to move the discussion forward.

RARE Technologies