Violent as the title sounds, I’ll actually be benchmarking software packages that implement nearest-neighbour search in high-dimensional vector spaces. Which approach is the fastest, the easiest to use, the best? No neighbours were harmed in writing this post.

This is a primer on similarity search, the first in a series. Feel free to skip to the meatier Part 2 if you’re familiar with the topic.

Oooh, spaces

Feature vectors with high dimensionality come up in applications surprisingly often: computer vision, recommendation systems, database systems. Arcane as they sound, they’re a convenient way of encoding an observation/document/item/whatever as a flat sequence of numbers, aka a vector. A document magically becomes a sparse vector (a sequence of floats… floats in spaces!), like in this gensim tutorial. There are tons of algorithms, math tricks and libraries that make effective use of vectors, and this item-to-vector transformation plugs into that pool conveniently. You can read more about vector spaces elsewhere, but let’s move on to search.
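To make the item-to-vector step concrete, here is a minimal bag-of-words sketch in plain Python. It is not how gensim does it; the helper names (`build_vocabulary`, `to_sparse_vector`) and the whitespace tokenization are assumptions made purely for illustration. Each document becomes a sparse vector, stored as a list of (token id, count) pairs with the zeros left out.

```python
from collections import Counter


def build_vocabulary(documents):
    """Assign each distinct token an integer id, in order of first appearance."""
    vocab = {}
    for doc in documents:
        for token in doc.lower().split():
            vocab.setdefault(token, len(vocab))
    return vocab


def to_sparse_vector(document, vocab):
    """Return sorted (token_id, count) pairs; unknown tokens are ignored."""
    counts = Counter(vocab[t] for t in document.lower().split() if t in vocab)
    return sorted((token_id, float(n)) for token_id, n in counts.items())


# Hypothetical two-document corpus.
docs = ["floats in spaces", "spaces and more spaces"]
vocab = build_vocabulary(docs)
vectors = [to_sparse_vector(d, vocab) for d in docs]
```

The dimensionality of the space is the vocabulary size, which is why real text collections easily end up with tens of thousands of dimensions or more.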

Similarity search in high dimensions

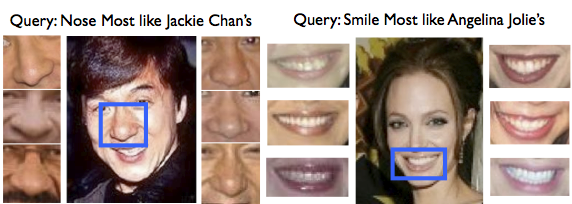

What are the top-10 most similar items to this one? How quickly can you find them? Searching for the “nearest neighbours” (=most similar vectors) of a given query item is a fundamental task in many domains, like information retrieval, bioinformatics, machine learning, data compression and pattern recognition.

When the vectors contain many numbers (“high dimensionality”), a nasty property called the curse of dimensionality kicks in. In short, neat techniques that work wonders in 1D or 2D, like binary search, get complicated. When “D” grows to hundreds or thousands, fancy partitioning can perform worse than a simple linear scan. Now, a linear scan is a dumb algorithm that visits each item in turn and explicitly computes its similarity to the query, keeping track of the best-N results so far. For a database of 1 million items, that’s one million item-query similarity computations. So if something performs worse than that, it’s pretty sad.
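The linear scan described above fits in a few lines. This is a minimal pure-Python sketch, assuming dense vectors stored as lists of floats and cosine similarity as the measure; the names `cosine` and `linear_scan` are made up for this example. A small min-heap keeps track of the best-N results seen so far.

```python
import heapq
import math


def cosine(u, v):
    """Cosine similarity of two dense vectors; 0.0 if either has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def linear_scan(query, database, topn=10):
    """Visit every item once, keeping the best `topn` (similarity, index) pairs."""
    best = []  # min-heap: best[0] is the weakest of the current top results
    for idx, vec in enumerate(database):
        sim = cosine(query, vec)
        if len(best) < topn:
            heapq.heappush(best, (sim, idx))
        elif sim > best[0][0]:
            heapq.heapreplace(best, (sim, idx))
    return sorted(best, reverse=True)  # most similar first


# Hypothetical toy database of four 2D vectors.
database = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
result = linear_scan([1.0, 0.0], database, topn=2)
```

The cost is one similarity computation per database item, regardless of the dimensionality, which is exactly the baseline any fancier index has to beat.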

Dodging the Curse, State of the Art

What methods are used for similarity queries in high-dimensional spaces? Facing the curse of dimensionality, direct extensions of low-dim algorithms, like the BSP (binary space partitioning, known from Quake) or the kd-tree, don’t work very well. People have been coming up with novel solutions in the past two decades: settling for an approximate answer (get the wrong neighbours, but get them fast), creating several randomized trees (for robustness) or clustering the vectors with k-means repeatedly, for a hierarchical indexing structure. A particularly interesting approach is having several methods implemented at once, and deciding which one to use (and with which parameters) at runtime, depending on the concrete input dataset. This is the approach taken by FLANN, one of the libraries I’ll be benchmarking here.
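To give a feel for the “approximate answer via randomized trees” idea, here is a toy sketch of a single random-split tree. It is an illustration of the general principle only, not the code or API of FLANN or any other library; all names (`build_tree`, `query_tree`, `leaf_size`) are hypothetical. Each node splits the points by a hyperplane halfway between two randomly picked points; a query descends to one leaf and is compared only against the handful of points stored there.

```python
import random


def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))


def on_positive_side(point, normal, offset):
    return sum(n * x for n, x in zip(normal, point)) - offset >= 0


def build_tree(points, indices, leaf_size, rng):
    """Recursively split `indices` by random hyperplanes until leaves are small."""
    if len(indices) <= leaf_size:
        return ("leaf", indices)
    a, b = (points[i] for i in rng.sample(indices, 2))
    normal = [x - y for x, y in zip(a, b)]
    # The hyperplane passes through the midpoint between a and b.
    offset = sum(n * (x + y) / 2.0 for n, x, y in zip(normal, a, b))
    left = [i for i in indices if on_positive_side(points[i], normal, offset)]
    right = [i for i in indices if not on_positive_side(points[i], normal, offset)]
    if not left or not right:  # degenerate split (e.g. duplicate points)
        return ("leaf", indices)
    return ("node", normal, offset,
            build_tree(points, left, leaf_size, rng),
            build_tree(points, right, leaf_size, rng))


def query_tree(tree, points, query, topn):
    """Descend to a single leaf, then brute-force only the candidates in it."""
    node = tree
    while node[0] == "node":
        _, normal, offset, left, right = node
        node = left if on_positive_side(query, normal, offset) else right
    return sorted(node[1], key=lambda i: sq_dist(points[i], query))[:topn]


# Hypothetical random data: 200 points in 8 dimensions.
rng = random.Random(0)
points = [[rng.random() for _ in range(8)] for _ in range(200)]
tree = build_tree(points, list(range(len(points))), leaf_size=10, rng=rng)
neighbours = query_tree(tree, points, points[42], topn=3)
```

The catch is visible in `query_tree`: a true nearest neighbour that landed on the other side of some split is never looked at. Building several such trees with different random splits and pooling their candidates is one way to make that less likely, at the cost of more memory and more work per query.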

Gensim contains the brute-force (aka linear, aka dumb) indexing. Its redeeming quality is that it’s very, very fast (low constant factor in the linear search, down to optimizing for CPU cache sizes), trivial to shard and parallelize and, like all dumb scans, has no memory overhead because there’s no extra search structure. I’ve flirted with other algorithms in the past, ones that build more complex indexing structures. But the integration always failed for one reason or another. Usually poor performance… beware of academic big-O promises, where “n” tends to infinity (unlike your data) and constant factors are “irrelevant” (unlike your hardware) :-)
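Why is a dumb scan trivial to shard? Because each shard can be scanned on its own and the partial top-N lists merged afterwards. The sketch below shows the idea in plain Python with dot-product similarity; it is not gensim’s implementation, and `scan_shard`, `sharded_search` and `shard_size` are names invented for this example.

```python
import heapq


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def scan_shard(query, shard, offset, topn):
    """Brute-force scan of one shard; returns (similarity, global_index) pairs."""
    scored = ((dot(query, vec), offset + i) for i, vec in enumerate(shard))
    return heapq.nlargest(topn, scored)


def sharded_search(query, database, shard_size, topn):
    """Scan each shard independently, then merge the partial top-N lists."""
    partial = []
    for start in range(0, len(database), shard_size):
        shard = database[start:start + shard_size]
        partial.extend(scan_shard(query, shard, start, topn))
    return heapq.nlargest(topn, partial)


# Hypothetical toy database: similarity to the query grows with the index.
database = [[float(i), 1.0] for i in range(10)]
top = sharded_search([1.0, 0.0], database, shard_size=3, topn=2)
```

The loop over shards runs sequentially here, but no shard depends on any other, so each `scan_shard` call could just as well run in a separate process or on a separate machine.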

“You cannot sort an array of numbers faster than O(N log N). ’Tis known.”

What’s next?

I talked to Erik Bernhardsson from Spotify recently, which finally nudged me toward doing a more thorough, practical benchmark of the various nearest-neighbour (or NN) approaches. For the purpose of music recommendations at Spotify, Erik developed and open-sourced a neat little library for NN search, called Annoy. I just had to try it :-)

I’ll be comparing the retrieval performance of Annoy and a few others, on the English Wikipedia. The goal is to see whether it’s time to supplement the similarity search in gensim with something more refined.

You can do some more reading, or continue on to Part 2: The Contestants (or check my Twitter for updates if you’re too early).

RARE Technologies
