If you’ve learned how to train topic models in Gensim but aren’t able to get satisfying results, we have a new tutorial on GitHub that will help you get on the right track. Primarily, you will learn about pre-processing text data for the LDA model. You will also get some tips about how to set the parameters …
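
A minimal sketch of two pre-processing steps that commonly matter for LDA, written in plain Python so it runs without Gensim. The first step tokenizes with lowercasing and stopword removal. The second drops tokens that are too rare or too common across documents. The `no_below`/`no_above` names mirror the parameters of Gensim's `Dictionary.filter_extremes`; the stopword list, the token regex and the helper names `tokenize` and `filter_extremes` are illustrative assumptions, not the tutorial's code.

```python
import re
from collections import Counter

# Illustrative stopword list (assumption); real pipelines use a much fuller list.
STOPWORDS = {"a", "an", "and", "are", "for", "in", "is", "of", "on", "the", "to", "with"}


def tokenize(text, min_len=3):
    """Lowercase, keep alphabetic runs only, drop stopwords and short tokens."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [t for t in tokens if len(t) >= min_len and t not in STOPWORDS]


def filter_extremes(docs, no_below=2, no_above=0.5):
    """Keep tokens found in at least `no_below` documents and in at most a
    fraction `no_above` of all documents (counted per document, not per occurrence)."""
    doc_freq = Counter(tok for doc in docs for tok in set(doc))
    n_docs = len(docs)
    keep = {tok for tok, n in doc_freq.items()
            if n >= no_below and n / n_docs <= no_above}
    return [[tok for tok in doc if tok in keep] for doc in docs]


if __name__ == "__main__":
    raw = [
        "Topic models find latent topics in text.",
        "LDA is a topic model for text collections.",
        "Neural networks learn word embeddings.",
        "Word embeddings and topic models are complementary.",
    ]
    docs = [tokenize(t) for t in raw]
    print(filter_extremes(docs, no_below=2, no_above=0.5))
```

On those four sentences, `topic` appears in three of four documents and so exceeds `no_above=0.5`, while words seen in only one document fall below `no_below=2`; what survives is `models`, `text`, `word` and `embeddings`.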

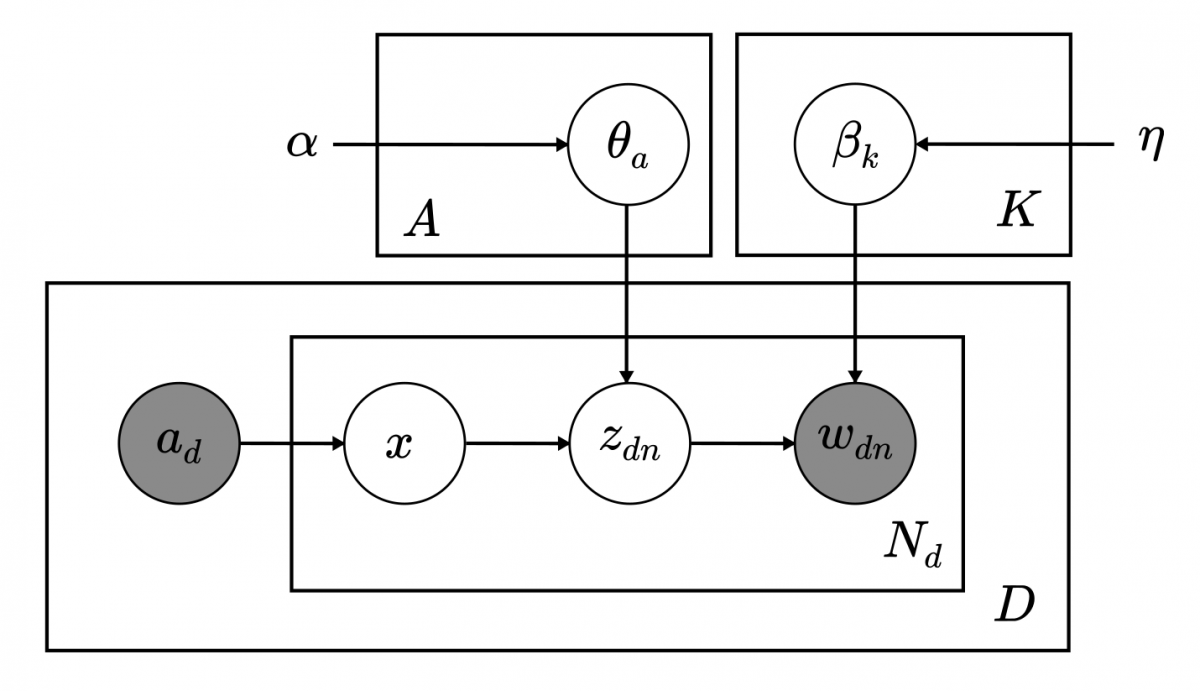

Author-topic models: why I am working on a new implementation

Author-topic models promise to give data scientists a tool to gain insight into authorship and content simultaneously, in terms of latent topics. The model is closely related to Latent Dirichlet Allocation (LDA). Each author can be associated with multiple documents, and each document can be attributed to multiple authors. The model learns topic representations for each author, so that …
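
The author/document relation described above is many-to-many, so it is convenient to store it in both directions. The sketch below builds those two mappings from per-document author lists. Its structure mirrors the `author2doc` and `doc2author` dictionaries that Gensim's `AuthorTopicModel` accepts, but the helper `build_author_maps` and the example author names are hypothetical.

```python
from collections import defaultdict


def build_author_maps(doc_authors):
    """Turn per-document author lists into two lookup tables.

    doc_authors[i] lists the authors of document i. Returns
    (author2doc, doc2author): author -> list of document ids,
    and document id -> list of authors.
    """
    author2doc = defaultdict(list)
    doc2author = {}
    for doc_id, authors in enumerate(doc_authors):
        doc2author[doc_id] = list(authors)
        for author in authors:
            author2doc[author].append(doc_id)
    return dict(author2doc), doc2author


if __name__ == "__main__":
    # Hypothetical example: three documents, two of them co-authored.
    a2d, d2a = build_author_maps([["alice", "bob"], ["alice"], ["carol", "bob"]])
    print(a2d)  # {'alice': [0, 1], 'bob': [0, 2], 'carol': [2]}
    print(d2a)
```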

What is Topic Coherence?

What exactly is this topic coherence pipeline? Why is it important? And what is the advantage of having this pipeline at all? In this post I will try to answer those questions in language that is as non-technical as possible. The post is meant for the general reader as much as for the technical one, so I will try to engage …
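
To make "coherence" concrete, here is a sketch of one well-known measure, UMass coherence, which is among the options behind `coherence='u_mass'` in Gensim's `CoherenceModel`. It scores a topic's top words by how often they appear together in the same documents. This is a plain-Python illustration, not the full pipeline the post discusses. Library implementations may smooth and aggregate differently (for example, averaging instead of summing), so the numbers will not necessarily match.

```python
import math
from itertools import combinations


def umass_coherence(top_words, docs):
    """UMass coherence of one topic (higher means more coherent).

    top_words: the topic's top words, ordered from most to least probable.
    docs: documents, each an iterable of tokens.
    Each pair with w_j ranked above w_i contributes
    log((D(w_i, w_j) + 1) / D(w_j)), where D counts documents containing
    all the given words. Assumes every top word occurs in some document.
    """
    doc_sets = [set(d) for d in docs]

    def doc_count(*words):
        return sum(1 for d in doc_sets if all(w in d for w in words))

    score = 0.0
    for j, i in combinations(range(len(top_words)), 2):  # always j < i
        w_i, w_j = top_words[i], top_words[j]
        score += math.log((doc_count(w_i, w_j) + 1) / doc_count(w_j))
    return score


if __name__ == "__main__":
    docs = [["bank", "money", "loan"], ["bank", "river"],
            ["money", "loan"], ["river", "water"]]
    print(umass_coherence(["money", "loan", "bank"], docs))    # log(1.5), about 0.405
    print(umass_coherence(["money", "water", "river"], docs))  # log(0.5), about -0.693
```

The first topic's words co-occur in the toy documents, so it scores higher than the second, whose words mostly appear apart.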

2016 Student Data Science Programs with RaRe Technologies

RaRe Technologies is deeply rooted in the open source community, and we are always seeking out opportunities to dedicate our experience and time to the next generation of computer scientists. Often the first step is to connect ambitious students to the resources they need to truly make an impact with hands-on projects and mentorship. These up-and-coming students have …

Text Summarization with Gensim

Text summarization is one of the most exciting areas of NLP, allowing developers to quickly find meaning and extract key words and phrases from documents. RaRe Technologies’ newest intern, Ólavur Mortensen, walks readers through the text summarization features in Gensim.
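
For a feel of what extractive summarization does, here is a deliberately simple frequency-based sketch. It scores each sentence by how frequent its content words are across the whole text and keeps the top `k` sentences in their original order. This is a swapped-in technique for illustration only: Gensim's summarizer is graph-based (a TextRank variant), and the `frequency_summary` helper and stopword list below are hypothetical.

```python
import re
from collections import Counter

# Illustrative stopword list (assumption).
STOPWORDS = {"a", "an", "and", "in", "is", "it", "of", "on", "the", "to"}


def frequency_summary(text, k=1):
    """Return the k best-scoring sentences of `text`, in their original order.

    A sentence's score is the mean frequency (over the whole text) of its
    non-stopword words, so sentences built from recurring words rank higher.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

    def content_words(sentence):
        return [w for w in re.findall(r"[a-z]+", sentence.lower()) if w not in STOPWORDS]

    freq = Counter(w for s in sentences for w in content_words(s))

    def score(sentence):
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    best = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)[:k]
    return [sentences[i] for i in sorted(best)]


if __name__ == "__main__":
    text = ("Gensim builds topic models. Topic models summarize large text "
            "collections. The weather was pleasant.")
    print(frequency_summary(text, k=2))
```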

Making sense of word2vec

One year ago, Tomáš Mikolov (together with his colleagues at Google) made some ripples by releasing word2vec, an unsupervised algorithm for learning the meaning behind words. In this blog post, I’ll evaluate some extensions that have appeared over the past year, including GloVe and matrix factorization via SVD.
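
Count-based alternatives such as SVD usually start from a word-context co-occurrence matrix reweighted with positive pointwise mutual information, PPMI(w, c) = max(0, log(P(w, c) / (P(w) P(c)))). The sketch below builds that sparse matrix from tokenized sentences. Factorizing it (for example with `numpy.linalg.svd` on a dense version) would then yield dense word vectors. Whether the post used exactly this weighting and window size is an assumption, and the helper names are hypothetical.

```python
import math
from collections import Counter


def cooccurrences(sentences, window=2):
    """Count (word, context) pairs within `window` tokens on either side."""
    counts = Counter()
    for sent in sentences:
        for i, w in enumerate(sent):
            lo, hi = max(0, i - window), min(len(sent), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[(w, sent[j])] += 1
    return counts


def ppmi(counts):
    """Positive PMI for every observed (word, context) pair.

    P(w, c) = n / total, P(w) = row_total / total, P(c) = col_total / total,
    so P(w, c) / (P(w) P(c)) = n * total / (row_total * col_total).
    """
    total = sum(counts.values())
    w_tot, c_tot = Counter(), Counter()
    for (w, c), n in counts.items():
        w_tot[w] += n
        c_tot[c] += n
    return {
        (w, c): max(0.0, math.log(n * total / (w_tot[w] * c_tot[c])))
        for (w, c), n in counts.items()
    }


if __name__ == "__main__":
    sents = [["the", "cat", "sat"], ["the", "dog", "sat"]]
    print(ppmi(cooccurrences(sents, window=1)))
```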

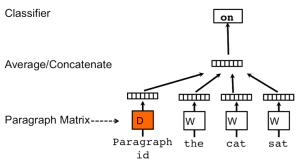

Doc2vec tutorial

The latest gensim release, 0.10.3, has a new class named Doc2Vec. All credit for this class, an implementation of Quoc Le and Tomáš Mikolov’s “Distributed Representations of Sentences and Documents”, as well as for this tutorial, goes to the illustrious Tim Emerick. Doc2vec (aka paragraph2vec, aka sentence embeddings) adapts the word2vec algorithm to unsupervised learning of continuous …
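
Le and Mikolov describe two paragraph-vector variants, PV-DM (distributed memory) and PV-DBOW (distributed bag of words). In PV-DM, a vector learned for the document's tag is combined with the vectors of the surrounding words to predict a target word. The sketch below only generates those training examples for one document. The function name and window size are illustrative, and it does not reproduce Gensim's training loop.

```python
def pv_dm_examples(tag, words, window=2):
    """Yield (tag, context_words, target_word) triples for one document.

    In PV-DM, the vector learned for `tag` is combined with the vectors of
    up to `window` words on each side to predict the word in the middle.
    """
    for i, target in enumerate(words):
        context = words[max(0, i - window):i] + words[i + 1:i + 1 + window]
        yield tag, context, target


if __name__ == "__main__":
    # Hypothetical tagged document.
    for example in pv_dm_examples("doc_0", ["the", "cat", "sat", "down"], window=1):
        print(example)
```

In PV-DBOW, by contrast, the tag vector alone predicts words sampled from the document, so its training examples reduce to (tag, word) pairs.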

Data streaming in Python: generators, iterators, iterables

There are tools and concepts in computing that are very powerful but potentially confusing even to advanced users. One such concept is data streaming (aka lazy evaluation), which can be realized neatly and natively in Python. Do you know when and how to use generators, iterators and iterables?
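
A minimal sketch of the distinction: a class whose `__iter__` is a generator function is an iterable that can be streamed many times, while a plain generator is an iterator that is exhausted after one pass. The names `StreamedCorpus` and `stream_once` are hypothetical, and the in-memory list stands in for a file that would normally be read line by line.

```python
class StreamedCorpus:
    """An iterable: every call to iter() starts a fresh, lazy pass over the data."""

    def __init__(self, lines):
        # In practice you would store a file path here and open the file in
        # __iter__; a list keeps this sketch self-contained.
        self.lines = lines

    def __iter__(self):
        # A generator function: documents are produced one at a time, on demand.
        for line in self.lines:
            yield line.lower().split()


def stream_once(lines):
    """A generator: lazy like the class above, but exhausted after one pass."""
    for line in lines:
        yield line.lower().split()


if __name__ == "__main__":
    data = ["Hello world", "Lazy evaluation in Python"]
    corpus = StreamedCorpus(data)
    print(list(corpus) == list(corpus))  # True: each pass restarts
    gen = stream_once(data)
    print(len(list(gen)), len(list(gen)))  # 2 0: the second pass finds nothing
```

This matters for code that makes several passes over a corpus (for example, one pass to build a vocabulary and more to train): hand it the re-iterable object, not a one-shot generator.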

Tutorial on Mallet in Python

MALLET (“MAchine Learning for LanguagE Toolkit”) is a brilliant software tool. Unlike gensim, “topic modelling for humans”, which uses Python, MALLET is written in Java and spells “topic modeling” with a single “l”. Dandy.

Word2vec Tutorial

RARE Technologies